S3 was one of the first services offered by AWS in 2006. Since then, a lot of features have been added but the core concepts of S3 are still Buckets and Objects.

Buckets

Buckets are containers of objects we want to store. An important thing to note here is that S3 requires the name of the bucket to be globally unique.

Objects are the actual things we are storing in S3. They are identified by a key which is a sequence of Unicode characters whose UTF-8 encoding is at most 1,024 bytes long.

Key Delimiter

By default, the “/” character gets special treatment if used in an object key. Being an object store, S3 does not use directories or folders but just keys. However, if we use a “/” in our object key, the AWS S3 console will render the object as if it was in a folder. So, if our object has the key “foo/bar/test.json”, the console will show a “folder” foo that contains a “folder” bar which contains the actual object.
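To make the key rules concrete, here is a minimal sketch in plain Python (no AWS calls). It checks the 1,024-byte key limit and mimics how a console could group flat keys into “folders” around the “/” delimiter. The function names and sample keys are made up for illustration; this is not how the S3 console is actually implemented.

```python
MAX_KEY_BYTES = 1024  # limit on the UTF-8 encoded length of an object key


def is_valid_key(key: str) -> bool:
    """Return True if the key is non-empty and fits the 1,024-byte limit."""
    return 0 < len(key.encode("utf-8")) <= MAX_KEY_BYTES


def list_level(keys, prefix="", delimiter="/"):
    """Group flat keys into "folders" and objects one level below `prefix`.

    Returns (folders, objects). Folders only exist as shared key prefixes;
    nothing is stored for them.
    """
    folders, objects = set(), []
    for key in keys:
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        head, sep, _ = rest.partition(delimiter)
        if sep:
            # The delimiter appears after the prefix: show it as a "folder".
            folders.add(prefix + head + delimiter)
        else:
            objects.append(key)
    return sorted(folders), sorted(objects)


# Hypothetical keys, for illustration only
keys = ["foo/bar/test.json", "foo/readme.txt", "top.txt"]
print(list_level(keys))              # (['foo/'], ['top.txt'])
print(list_level(keys, "foo/"))      # (['foo/bar/'], ['foo/readme.txt'])
print(list_level(keys, "foo/bar/"))  # ([], ['foo/bar/test.json'])
```

Note that the limit is counted in bytes, not characters, so a key made of non-ASCII characters reaches it sooner than its length suggests.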

You should be good to go now to take screenshots and upload them to S3.

This article is accompanied by a working code example on GitHub.

S3 stands for “simple storage service” and is an object store service hosted on Amazon Web Services (AWS), but what exactly does this mean?

You are probably familiar with databases (of any kind). Postgres, for example, is a relational database, very well suited for storing structured data that has a schema that won’t change too much over its lifetime. But what if we want to store more than just plain data? What if we want to store a picture, a PDF, a document, or a video? It is technically possible to store those binary files in Postgres but object stores like S3 might be better suited for storing unstructured data.

So we might ask ourselves, how is an object store different from a file store? Without going into the gory details, an object store is a repository that stores objects in a flat structure, similar to a key-value store.

As opposed to file-based storage where we have a hierarchy of files inside folders, inside folders, and so on, the only thing we need to get an item out of an object store is the key of the object we want to retrieve. Additionally, we can provide metadata (data about data) that we attach to the object to further enrich it.
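The “flat structure, similar to a key-value store” idea can be illustrated with a toy in-memory store. This is only a conceptual sketch: the class and method names are invented here and have nothing to do with the real S3 API.

```python
from dataclasses import dataclass, field


@dataclass
class StoredObject:
    data: bytes                                   # the raw content (picture, PDF, ...)
    metadata: dict = field(default_factory=dict)  # data about the data


class FlatObjectStore:
    """Toy object store: one flat mapping from key to object, no folders."""

    def __init__(self):
        self._objects = {}

    def put(self, key, data, metadata=None):
        # Copy the metadata so later changes by the caller don't leak in.
        self._objects[key] = StoredObject(data, dict(metadata or {}))

    def get(self, key):
        # The key is all we need; there is no directory tree to walk.
        return self._objects[key]


store = FlatObjectStore()
store.put("foo/bar/test.json", b"{}", {"content-type": "application/json"})
obj = store.get("foo/bar/test.json")
print(obj.data, obj.metadata)
```

The slashes in the key carry no structural meaning for the store itself; the whole string is simply the lookup key.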
Click “Manage Access Keys” to create a key. Be sure to copy/paste these as you won’t be able to see them once you close the modal (and will need them later).

Click “Create Bucket” and in the modal, enter your preferred bucket name. NOTE: If you want to use a custom domain (ie s.), you must enter that as the bucket name. You can select the region that works best for you (I selected “US Standard”). That should be it for the S3 side.

I’m not going to go into too much detail here as every DNS provider is a little different. Essentially though, you want to create a CNAME record for your subdomain (or domain if you’re not using a sub) that points to “s3.” In my case I created a CNAME “s” that points to S3. Keep in mind, your changes may take a while to propagate.

If you haven’t played around in here, I recommend you do so. You can change things like the filename format (in “Advanced”). What we want to do now is set up the Account tab.

1. In the left side list, click “Amazon S3.” Go ahead and click the “default” button near the top to make sure this is always the default account for your uploads.
2. Enter your Access Key ID and Secret Access Key you got before in the S3 step.
3. Click Refresh to refresh your bucket list, and then select the bucket you created in the S3 console.
4. If you want to change the path (a sub-folder), go ahead and enter that.
5. If you’re going to use a custom subdomain, enter that in the Base URL.
6. Test settings to make sure you’re good.
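As a quick sanity check on the custom-domain note and the Base URL and path settings, here is a small Python sketch. The domain `s.example.com`, the folder name, and both function names are hypothetical, and the way the link is assembled is my assumption about the layout, not documented Monosnap behavior.

```python
from urllib.parse import quote, urlparse


def bucket_matches_host(bucket: str, base_url: str) -> bool:
    """True if the Base URL's hostname equals the bucket name.

    Mirrors the note above: with a custom (sub)domain, the bucket has to be
    named after that domain. `base_url` must include http:// or https://.
    """
    return urlparse(base_url).hostname == bucket.lower()


def screenshot_url(base_url: str, path: str, filename: str) -> str:
    """Join Base URL, optional sub-folder path, and filename (assumed layout)."""
    parts = [p.strip("/") for p in (path, filename) if p.strip("/")]
    return base_url.rstrip("/") + "/" + "/".join(quote(p) for p in parts)


# Hypothetical values, for illustration only
print(bucket_matches_host("s.example.com", "http://s.example.com"))     # True
print(screenshot_url("http://s.example.com", "shots/", "my shot.png"))
# http://s.example.com/shots/my%20shot.png
```

If the first check prints False for your own settings, the bucket name and the custom domain probably don't line up.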

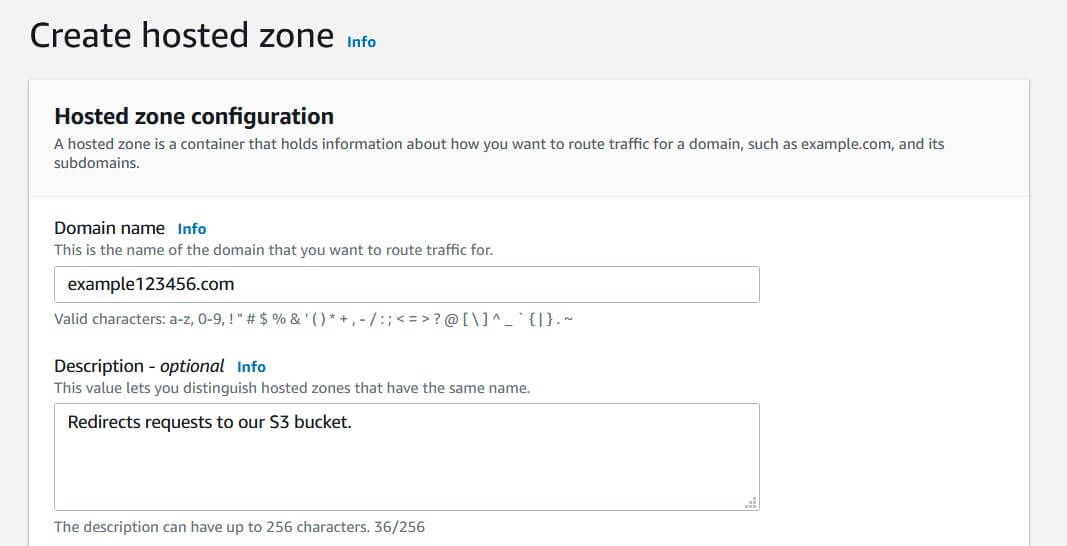

Originally, I had Monosnap saving to a folder on my Dropbox account. This was fine and dandy, but still had some annoyances, like the link taking a bit to copy. Monosnap has a built-in S3 integration which is pretty easy to set up.

I’m assuming you’ve already created an Amazon AWS account and are in general familiar with how to navigate the console. You may want to create a separate user for Monosnap to use, and give it full access to manage S3. To give an existing user access to manage your S3 buckets, go to IAM -> Users and select the user to edit. In the “Permissions” section, click “Attach User Policy.” Search for “AmazonS3FullAccess” in the “Select Policy Template” list and click Select.

Next up is giving the user an Access Key. Just below the permissions section is a “Security Credentials” section.
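If you’d rather script the IAM part than click through the console, something along these lines should work with boto3. Treat it as a sketch: the helper name and the user name “monosnap” are placeholders of mine, and it assumes boto3 is installed and your own credentials are allowed to manage IAM.

```python
# ARN of the AWS-managed policy that the console lists as "AmazonS3FullAccess"
S3_FULL_ACCESS_ARN = "arn:aws:iam::aws:policy/AmazonS3FullAccess"


def grant_s3_access(iam, user_name):
    """Attach AmazonS3FullAccess to an existing user and create an access key.

    `iam` is expected to be a boto3 IAM client. Returns the pair
    (access_key_id, secret_access_key); the secret is only returned at
    creation time, so store it right away.
    """
    iam.attach_user_policy(UserName=user_name, PolicyArn=S3_FULL_ACCESS_ARN)
    key = iam.create_access_key(UserName=user_name)["AccessKey"]
    return key["AccessKeyId"], key["SecretAccessKey"]


# Usage (placeholder user name, requires boto3 and AWS credentials):
#   import boto3
#   key_id, secret = grant_s3_access(boto3.client("iam"), "monosnap")
```

The two values it returns are the same Access Key ID and Secret Access Key that the console shows in the key creation modal.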
